Big Data, n.: the belief that any sufficiently large pile of s— contains a pony.

In a recent Washington Post article, Samuel Arbesman shared the tweet above and debunked “Five Myths about Big Data,” including the misconceived notion that “Bigger data is better.”

“In science, some admittedly mind-blowing big-data analyses are being done. In business, companies are being told to ‘embrace big data before your competitors do.’ But big data is not automatically better. Really big datasets can be a mess. Unless researchers and analysts can reduce the number of variables and make the data more manageable, they get quantity without a whole lot of quality. Give me some quality medium data over bad big data any day.”

The Data Warehousing Institute estimates that poor data quality costs American businesses SIX HUNDRED BILLION DOLLARS ANNUALLY. Got your attention? And there’s no reason to believe that associations aren’t bearing a proportionate share of that loss. Over the last 30 years, I’ve worked with dozens of associations, and from last century’s index cards to this century’s electronic records, I have yet to see a truly clean association dataset.

So what exactly does it mean to have high-quality data? Definitions abound, but here’s one that goes to the heart of the issue:

Data are of high quality “if they are fit for their intended uses in operations, decision making and planning” (J. M. Juran).

In other words, quality is defined by how the data will be used, not necessarily by the specific attributes of the data itself. With that context in mind, David Bowman recommends eight Quality Data Management Objectives:

- Accessibility – retrieved as needed by appropriate users

- Accuracy – exact and precise

- Completeness – fulfilling formal requirements and expectations with no gaps

- Timeliness – current and not outdated or obsolete

- Integrity – what was requested and expected

- Validity – obtained via an approval process

- Consistency – commonly defined and used across the enterprise

- Relevance – fits intended use
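Some of these objectives, completeness and timeliness in particular, can be measured directly. The Python sketch below is a minimal illustration under stated assumptions: the member records, the field names (`last_name`, `email`, `address`, `last_updated`), and the one-year staleness cutoff are all hypothetical. Following Juran’s definition, what counts as “complete” or “current” should come from the data’s intended use, not from the code.

```python
from datetime import date, timedelta

# Hypothetical member records; field names are illustrative, not from any real system.
MEMBERS = [
    {"id": 1, "last_name": "Smith", "email": "ann@example.org", "address": "12 Oak St", "last_updated": date(2024, 3, 1)},
    {"id": 2, "last_name": "Jones", "email": "", "address": "9 Elm Ave", "last_updated": date(2019, 6, 15)},
    {"id": 3, "last_name": "", "email": "bob@example.org", "address": None, "last_updated": date(2024, 1, 10)},
]

# Assumed set of fields the intended use requires.
REQUIRED_FIELDS = ("last_name", "email", "address")


def completeness(records, fields):
    """Share of records with a non-empty value for each required field."""
    total = len(records)
    return {f: sum(1 for r in records if r.get(f)) / total for f in fields}


def stale_ids(records, as_of, max_age_days=365):
    """IDs of records not updated within max_age_days of as_of (a timeliness check)."""
    cutoff = as_of - timedelta(days=max_age_days)
    return [r["id"] for r in records if r["last_updated"] < cutoff]


if __name__ == "__main__":
    print(completeness(MEMBERS, REQUIRED_FIELDS))
    print(stale_ids(MEMBERS, as_of=date(2024, 6, 30)))
```

With this sample data, each required field is filled in two of three records, and only record 2 falls outside the one-year window.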

All too often in associations, and I’m sure in business generally, data quality management gets nowhere near the attention it deserves because it’s so difficult to put a hard dollar amount on its value. Oh, and by the way, managing data quality can be about as tedious and boring as a job can get. These two shortcomings usually cause the C-suite folks to ignore it and the rest of the staff to cringe whenever it comes up.

But, as noted above, there are both quantitative and qualitative costs associated with dirty data.

- Bad mailing addresses and undetected duplicate mailing addresses (and we’ve all seen them in our mailbox – over and over and …) mean wasted production and postage along with additional handling costs that continue to drain dollars.

- Bad email addresses, especially when unpurged, result in blacklisting by firms like SpamCop, Barracuda, etc., which in turn generates hundreds if not thousands of rejections by network filters that rely on these reputation lists as a first line of defense against spam.

- Misspelled names show up in member correspondence, on name badges, etc., leaving a bad impression and potentially tipping an “at-risk” member into the pool of non-members.

- Inaccurately or incompletely captured transactions generate incorrect charges, inflated or deflated counts, charge-backs and higher rates from merchant card services, etc.

- Incorrect or incomplete demographics often mean missed sales opportunities, lost members and more.

- The list goes on and on and …
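Undetected duplicate mailing addresses often differ only in formatting (“Street” vs. “St.”, stray punctuation, mixed case). The Python sketch below normalizes addresses and groups names whose addresses collapse to the same string. It is a minimal illustration only: the abbreviation table is a tiny, hypothetical subset of the kind of suffix standards published in USPS Publication 28, and real work would rely on a full address-verification service. Two names at one address may also be a household rather than a duplicate, so flagged groups still need a human look.

```python
import re
from collections import defaultdict

# Tiny illustrative subset of street-suffix and unit abbreviations (an assumption,
# not a complete postal standard).
ABBREVIATIONS = {"STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "SUITE": "STE", "APARTMENT": "APT"}


def normalize_address(raw):
    """Uppercase, replace punctuation with spaces, collapse whitespace, abbreviate common words."""
    cleaned = re.sub(r"[^\w\s]", " ", raw.upper())
    tokens = [ABBREVIATIONS.get(tok, tok) for tok in cleaned.split()]
    return " ".join(tokens)


def find_duplicate_addresses(mailing_list):
    """Group (name, address) pairs by normalized address; keep only groups with more than one name."""
    groups = defaultdict(list)
    for name, address in mailing_list:
        groups[normalize_address(address)].append(name)
    return {addr: names for addr, names in groups.items() if len(names) > 1}


if __name__ == "__main__":
    mailing_list = [
        ("Pat Lee", "123 Main Street, Suite 4"),
        ("Patricia Lee", "123 main st. ste 4"),
        ("Chris Roe", "77 Lake Road"),
    ]
    print(find_duplicate_addresses(mailing_list))
```

Here both Lee entries normalize to `123 MAIN ST STE 4` and are reported together, which is exactly the “over and over” mailing the list above describes.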

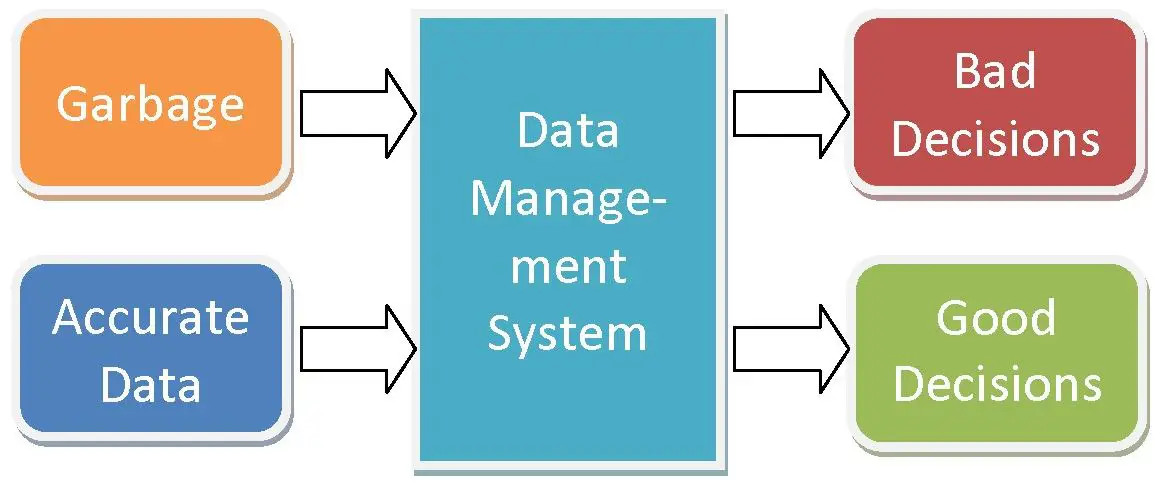

But the most serious consequence, and perhaps the most difficult to quantify, is the impact of poor data quality on organizational decision-making.

So how do you solve this problem? In “Best practices: For the want of a nail the kingdom was lost… Mother Goose was right: Profit by Best (Data Quality) Practices,” Virginia Prevosto offers nine elements that should be part of data quality management, but it all starts with recognition and ownership of the problem. Most experts recommend a cross-departmental data governance team whose sole role in the association is to be responsible for data quality. This is not an IT problem; it’s an organizational problem that requires an organizational response.

Here are the tools for improving data quality highlighted in Wikipedia:

- Data profiling – initially assessing the data to understand its quality challenges

- Data standardization – a business rules engine that ensures that data conforms to quality rules

- Geocoding – for name and address data; corrects data to US and worldwide postal standards

- Matching or Linking – a way to compare data so that similar, but slightly different records can be aligned. Matching may use “fuzzy logic” to find duplicates in the data. It often recognizes that ‘Bob’ and ‘Robert’ may be the same individual. It might be able to manage ‘householding’, or finding links between husband and wife at the same address, for example. Finally, it often can build a ‘best of breed’ record, taking the best components from multiple data sources and building a single super-record.

- Monitoring – keeping track of data quality over time and reporting variations in the quality of data. Software can also auto-correct the variations based on pre-defined business rules.

- Batch and Real time – Once the data is initially cleansed (batch), companies often want to build the processes into enterprise applications to keep it clean.
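To make matching concrete, here is a minimal Python sketch of duplicate detection between two member records. It is a simple stand-in, not any vendor’s algorithm: it uses the standard library’s `difflib.SequenceMatcher` for approximate string comparison rather than formal fuzzy logic, a hypothetical nickname table so that “Bob” and “Robert” compare equal, and hypothetical field names (`first`, `last`, `zip`) with an arbitrary 0.85 similarity threshold.

```python
from difflib import SequenceMatcher

# Hypothetical nickname table; commercial matching tools ship far larger ones.
NICKNAMES = {"BOB": "ROBERT", "ROB": "ROBERT", "BILL": "WILLIAM", "LIZ": "ELIZABETH", "BETH": "ELIZABETH"}


def canonical_first(name):
    """Map a first name to a canonical form so 'Bob' and 'Robert' compare equal."""
    key = name.strip().upper()
    return NICKNAMES.get(key, key)


def similarity(a, b):
    """Case-insensitive similarity ratio in [0, 1]; 1.0 means identical strings."""
    return SequenceMatcher(None, a.upper(), b.upper()).ratio()


def likely_same_person(rec_a, rec_b, threshold=0.85):
    """Flag a probable duplicate: same canonical first name, similar last name, same postal code."""
    return (
        canonical_first(rec_a["first"]) == canonical_first(rec_b["first"])
        and similarity(rec_a["last"], rec_b["last"]) >= threshold
        and rec_a["zip"] == rec_b["zip"]
    )


if __name__ == "__main__":
    a = {"first": "Bob", "last": "Johnson", "zip": "20036"}
    b = {"first": "Robert", "last": "Jonson", "zip": "20036"}
    print(likely_same_person(a, b))
```

“Johnson” and “Jonson” score about 0.92, so Bob Johnson and Robert Jonson at the same ZIP code are flagged as a probable match. Requiring an identical postal code is a design choice: it keeps comparisons cheap and cuts false positives, at the cost of missing members who have moved.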

Much like a successful exercise or weight-loss program, a sustainable data quality management program starts small but works away at the problem every day, day in and day out, until data quality is a deeply ingrained institutional habit shared by every employee from the C-suite to the mail room. The ultimate benefit: better decision-making at all levels of the association.

Additional Resources/References